Smoke: fine-grained lineage at interactive speed

Data lineage connects the input and output data items of a computation.

A backward lineage query selects a subset of output records and asks “which input records contributed to these results?”

A forward lineage query selects a subset of input records and asks “which output records depend on these inputs?”

Lineage-enabled systems capture record-level relationships throughout a workflow and support lineage queries.

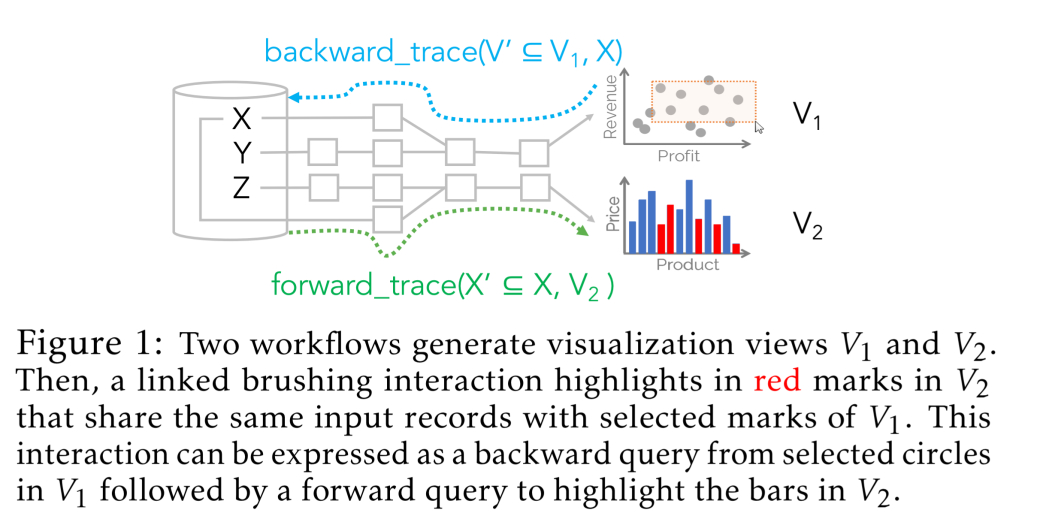

Example application: interactive visualization

- the same set of data powers two visualization graphs

v1andv2 - selecting a point in

v1highlights the associated data inv2

- usually implemented manually

- equivalently achieved by a backward lineage query from

v1and using those results in a forward lineage query intov2

Challenges with existing lineage capture systems

- space/time tradeoffs

- can slow down base query to capture + store lineage information (materialization) during a query to speed up later queries, or vice versa

Smoke’s four principles

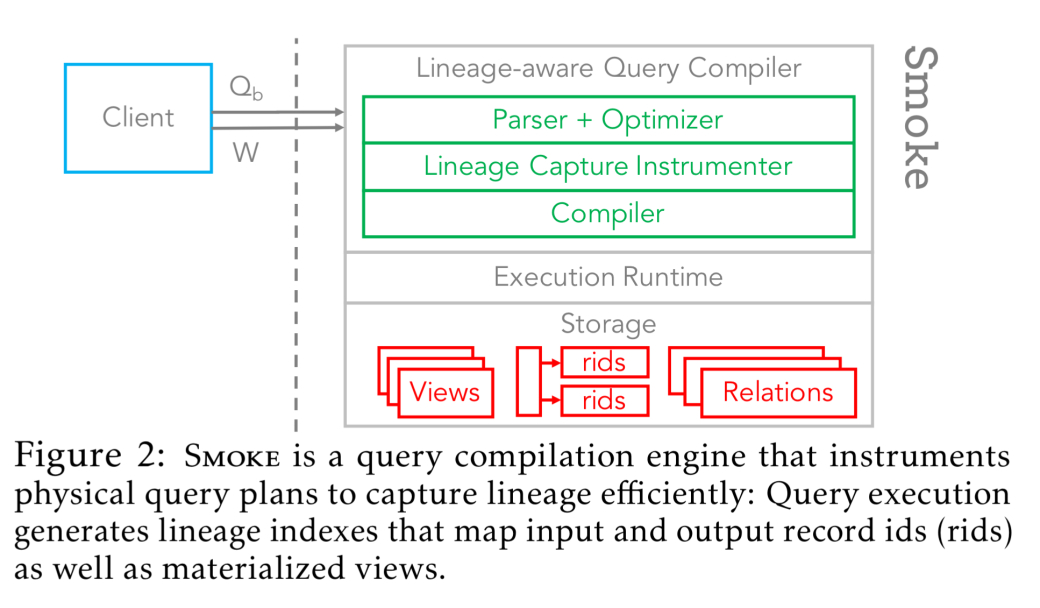

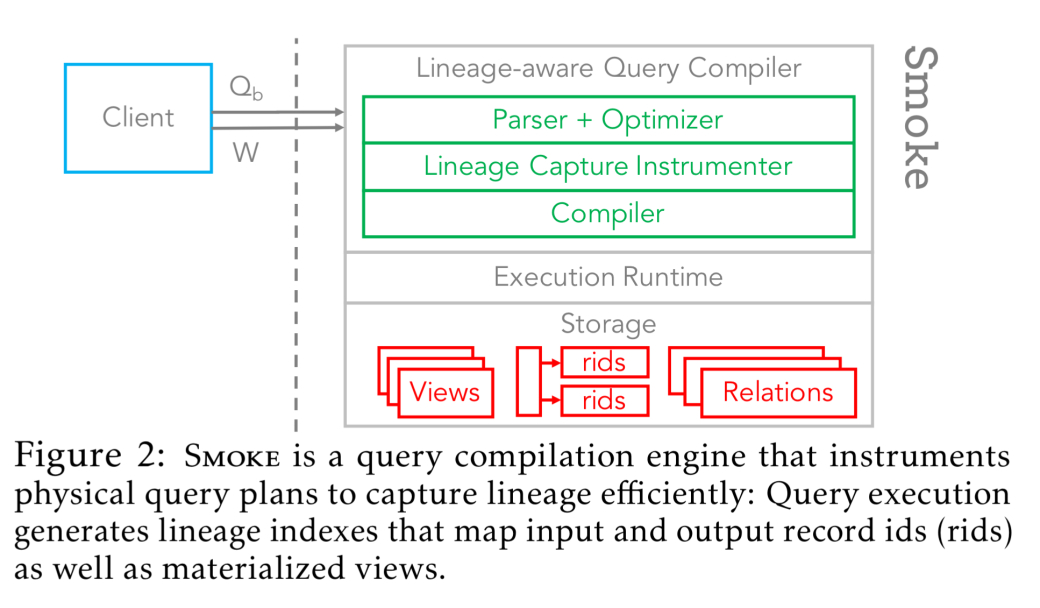

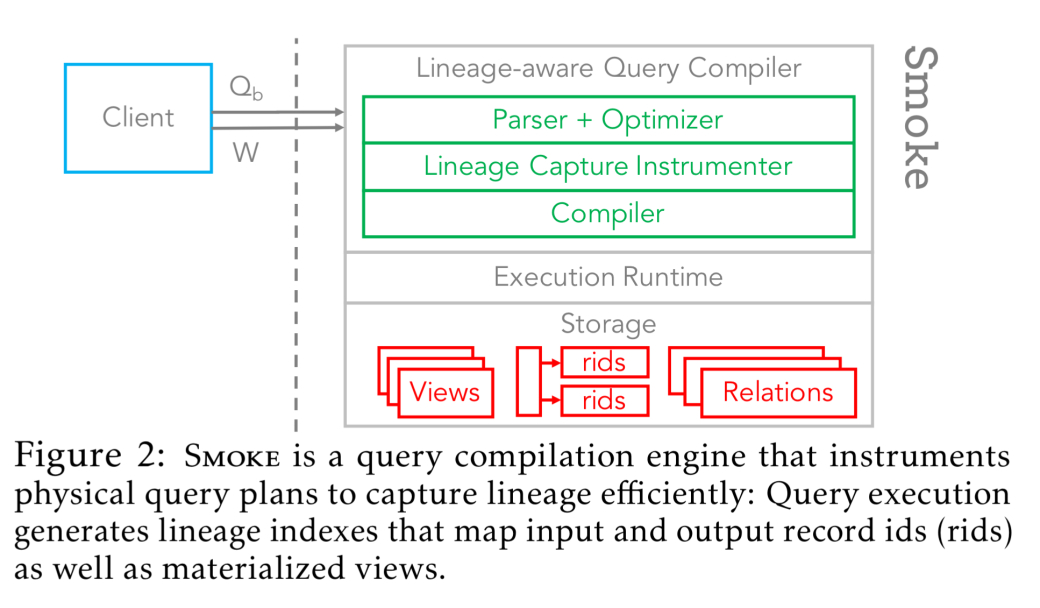

As data processing engines become faster, an important question —and the main focus of this paper— is whether it is possible to achieve the best of both worlds: negligible lineage capture overhead, as well as fast lineage query execution.

Smoke tries to achieve this with four principles:

- tight integration of lineage capture into query execution using write-efficient data structures

- when knowledge of lineage queries is available (ex. visualisation tool explorations), this knowledge is used to minimize the amount of lineage that needs to be materialized

- when knowledge of lineage queries is available and they only require aggregated information, then potentially materialize aggregate statistics as we process queries, and prune or re-partition lineage indices

- wherever possible, data structures constructed during normal operation execution are augmented and reused for lineage purposes rather than introducing separate dedicated structures

Lineage capture

Smoke uses read/write-efficient index structures based on row ids to capture lineage information between inputs and outputs

- 1-to-N relationships are represented as inverted indexes – ith entry corresponds to ith output group, pointing to a row id array of lineage inputs

- 1-to-1 relationships all share a single array

Figure 3 illustrates this pretty well:

Index population achieved via tight integration of lineage capture and relation operator logic. Depending on the operator and circumstance, we use defer or inject strategy.

Inject incurs full cost of index generation during base query.

Defer defers (portions of) capture until after the query execution.

Consider selection:

- while iterating over relation evaluating the predicate, inject uses two counters to track row ids of current input and output to update indices when an output row is emitted

Consider hash joins:

- Smoke generates backward row id arrays and forward row id indexes

- inject might trigger re-allocations of forward indexes in some cases

- defer takes advantage of knowing forward index sizes after probe phase

In multi-operator queries, Smoke propagates lineage information through such that only input and final output relations are emitted.

Workload-aware optimizations

When set of lineage queries are known beforehand, Smoke can apply:

instrumentation pruning: disables capture for indexes not used in the workload

optimization push-down: for queries that apply summarization/aggregation before results

Evaluation

Smoke lineage capture technique outperforms logical and physical approaches and speeds up lineage query evaluation

Real world results:

- lineage expresses many real-world tasks from visualization to data profiling, that are currently implemented manually in ad-hoc ways

- lineage capture mechanism is fast enough to avoid sacrificing performance vs. manual implementations and in come cases perform better

Smoke illustrates that it is possible to both capture lineage with low overhead and enable fast lineage query performance… Our capture techniques and workload-aware optimization make Smoke well-suited for online; adaptive; and offline physical database design settings.